FP16 inference with Cuda 11.1 returns NaN on Nvidia GTX 1660 · Issue #58123 · pytorch/pytorch · GitHub

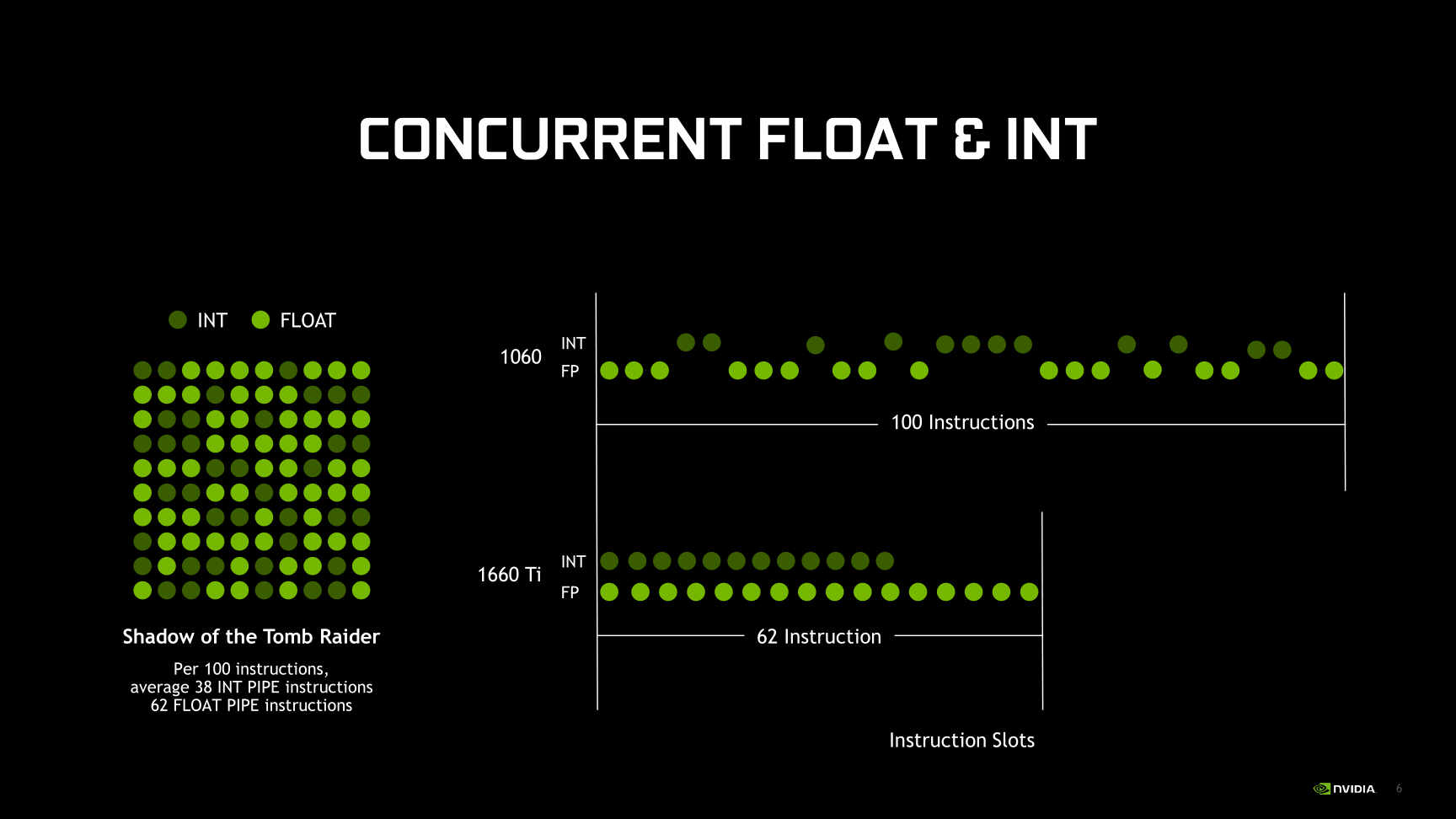

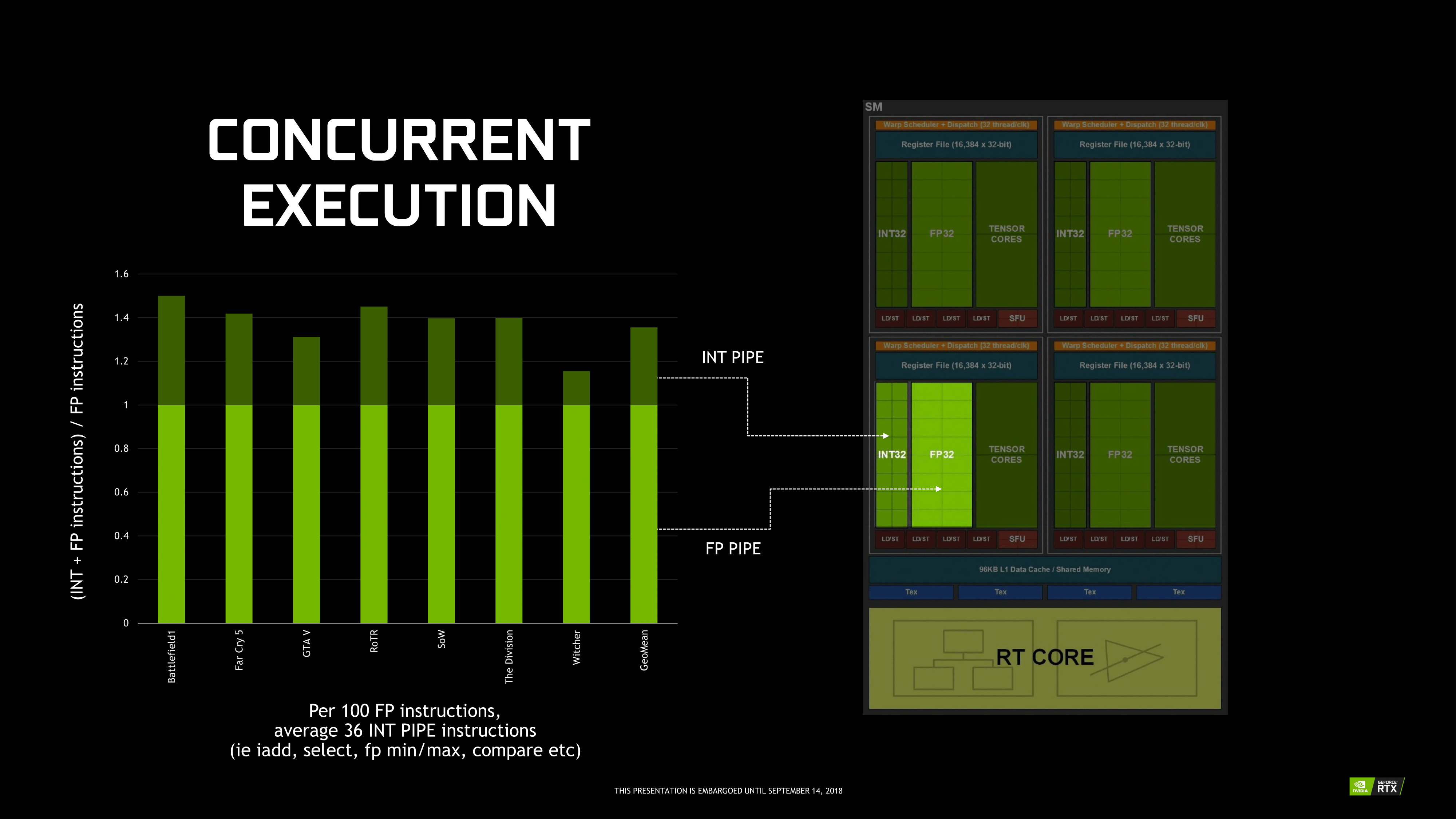

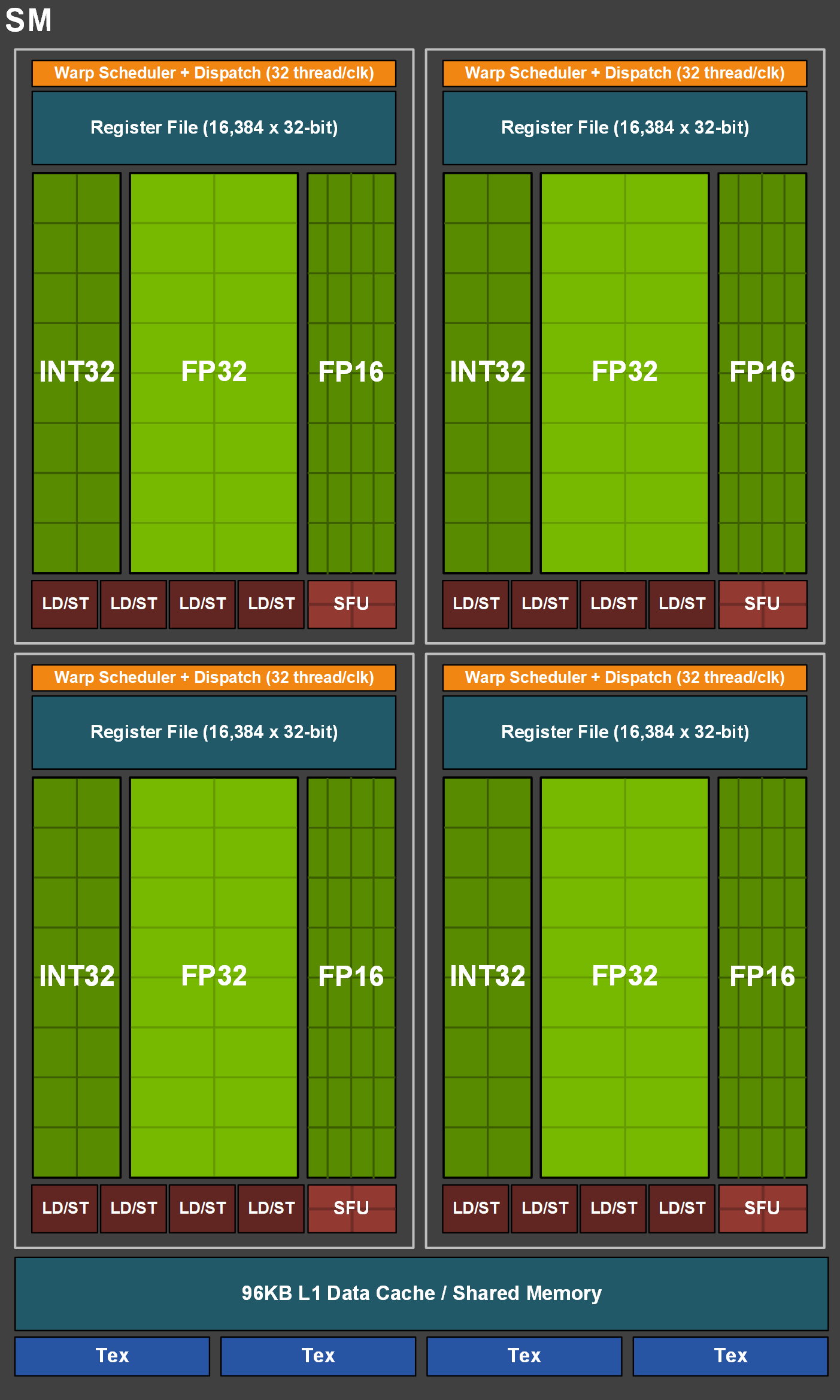

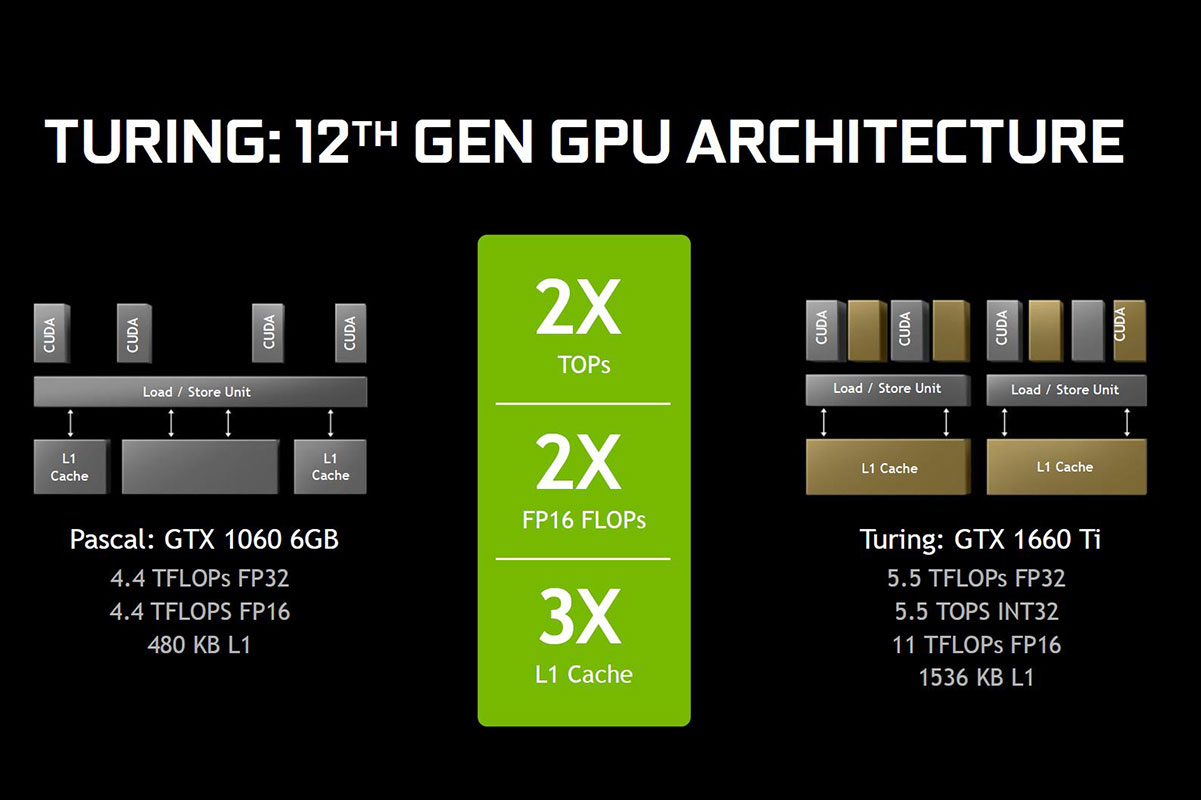

TU116: When Turing Is Turing… And When It Isn't - The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

List of NVIDIA Desktop Graphics Card Models for Building Deep Learning AI System | Amikelive | Technology Blog

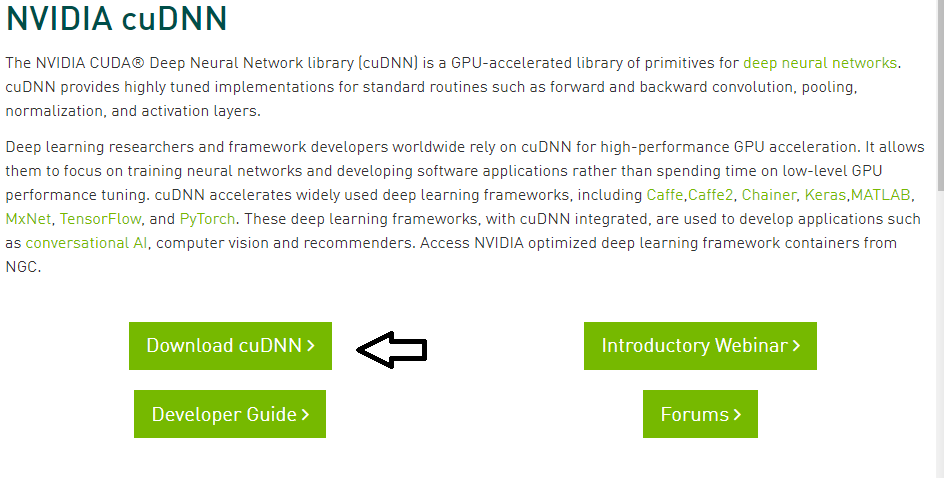

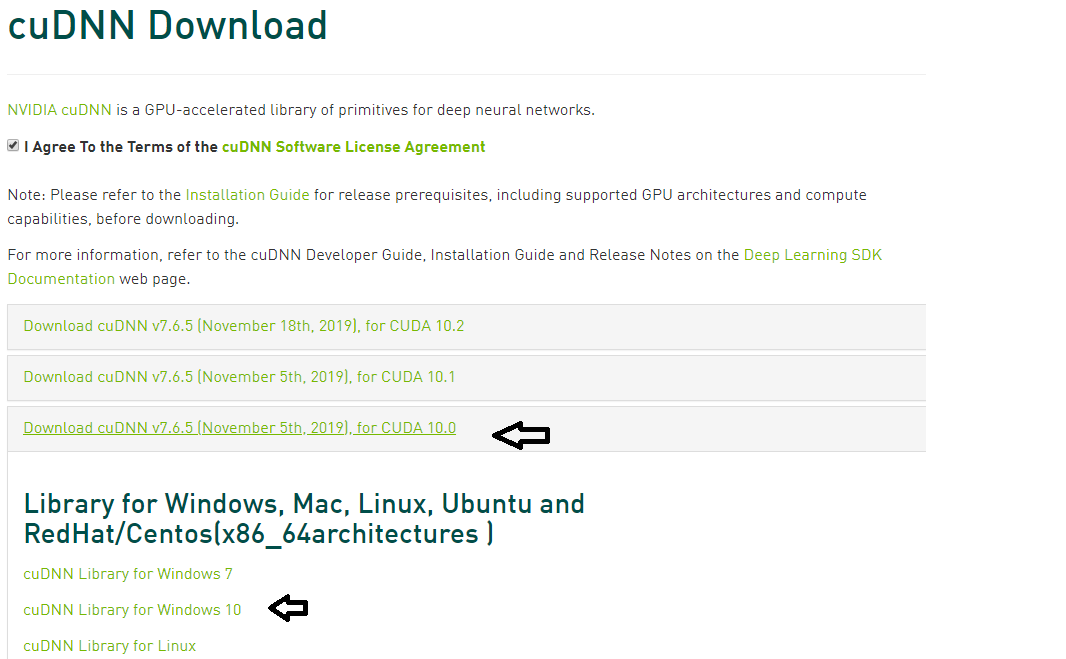

Install Tensorflow-GPU 2.0 with CUDA v10.0, cuDNN v7.6.5 for CUDA 10.0 on Windows 10 with NVIDIA Geforce GTX 1660 Ti. | by Suryatej MSKP | Medium

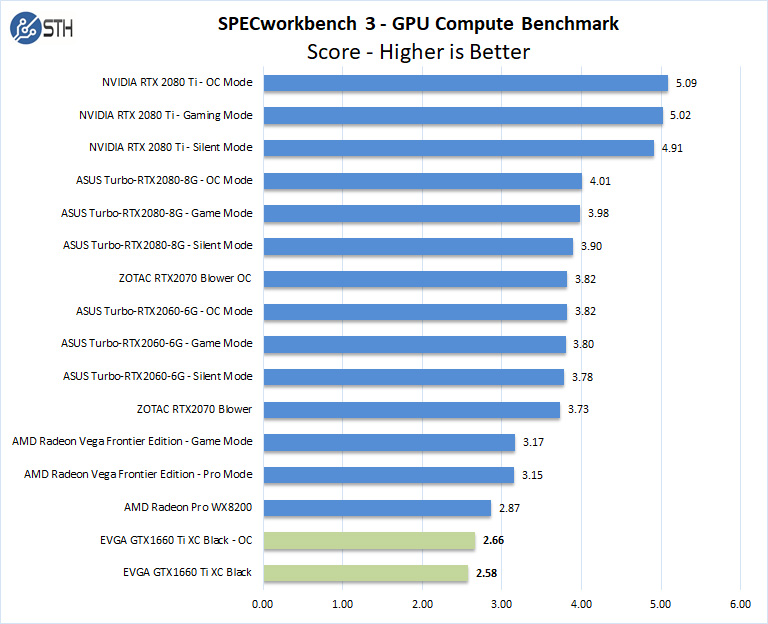

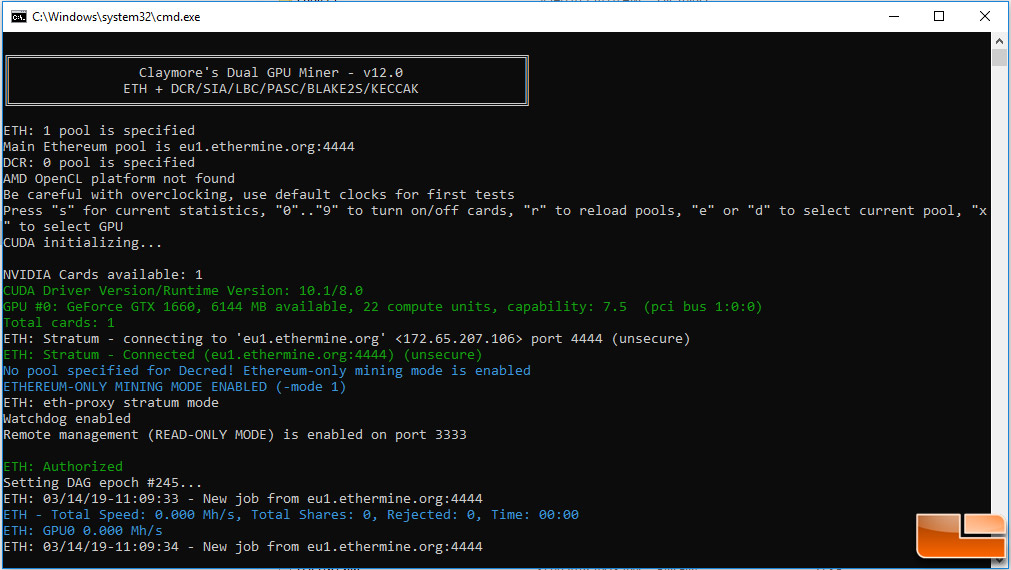

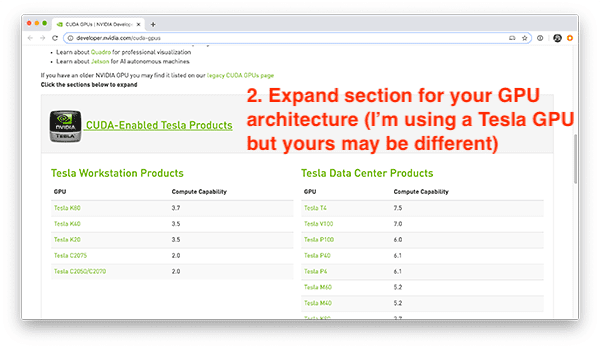

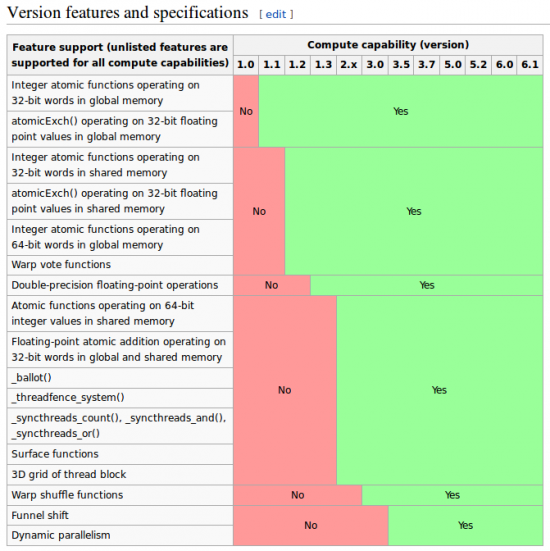

python - GPU Compute Capability 3.0 but the minimum required Cuda capability is 3.5 - Stack Overflow

![\[GPU Computing\] NVIDIA CUDA Compute Capability Comparative Table | Geeks3D](http://www.ozone3d.net/public/jegx/201006/nvidia_cuda_compute_capability_descr_01.jpg)

![\[GPU Computing\] NVIDIA CUDA Compute Capability Comparative Table | Geeks3D](http://www.ozone3d.net/public/jegx/201006/gpucapsviewer_compute_capability.jpg)