Monitor and Improve GPU Usage for Training Deep Learning Models | by Lukas Biewald | Towards Data Science

Training Deep Neural Networks on a GPU | Deep Learning with PyTorch: Zero to GANs | Part 3 of 6 - YouTube

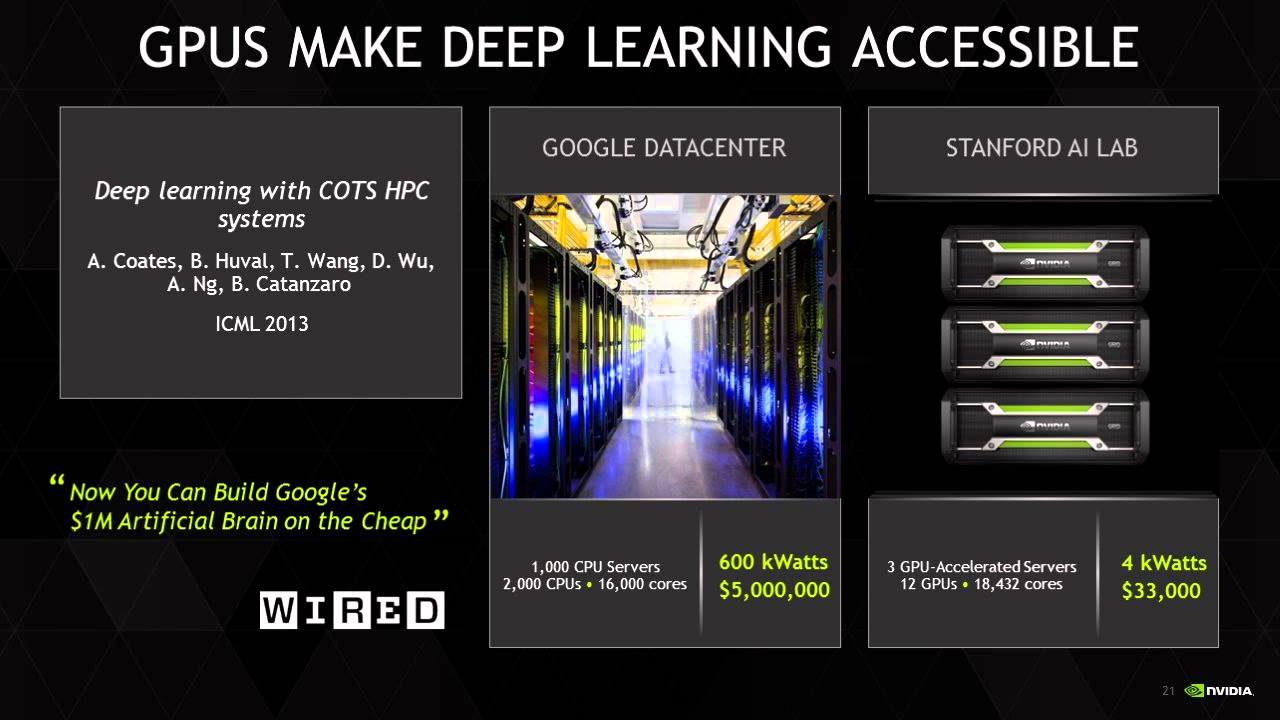

Researchers at the University of Michigan Develop Zeus: A Machine Learning-Based Framework for Optimizing GPU Energy Consumption of Deep Neural Networks (DNNs) Training - MarkTechPost